Here’s the uncomfortable truth that most localization vendors aren’t saying out loud: AI dubbing isn’t arriving—it’s already here, it’s already winning contracts, and the studios commissioning it don’t need your permission.

The question for every localization company and studio executive reading this in 2026 isn’t whether to engage with synthetic voice technology. It’s whether you understand it well enough to position your business on the right side of what’s coming next.

The numbers set the context. The global AI dubbing software market is valued at $1.16 billion in 2026 and projected to reach $3.66 billion by 2035—a 14.2% compound annual growth rate that puts it among the fastest-expanding segments in the entire entertainment supply chain. Meanwhile, the broader film dubbing market sits at $4.66 billion in 2026 and is expected to reach $8.79 billion by 2035. Two parallel markets, growing simultaneously, pulling in opposite directions—one automating the workflow, one expanding the total demand. That tension is exactly where the strategic opportunity lives.

For localization vendors, the Fragmentation Paradox is at work in a new way. There are now dozens of AI dubbing platforms, voice synthesis tools, and hybrid service providers—each claiming the most emotionally authentic output, the fastest turnaround, and the broadest language coverage. Studios trying to source the right localization partner for a 20-language simultaneous global release face the same information asymmetry that producers face when sourcing VFX vendors: too many options, too little verified intelligence, and a very narrow window to make the right call before the distribution slot closes.

This guide breaks down exactly where AI dubbing and voice synthesis stand in 2026, what it means operationally for vendors and studios, where the human-in-the-loop model actually wins, and how to de-risk your technology sourcing decisions in a market that’s moving faster than most trade coverage can keep up with.

Table of Contents

- The Market Reality: Two Growth Curves, One Disruption

- How AI Dubbing Actually Works in 2026

- Streaming Is the Catalyst—But Not the Whole Story

- The Vendor Landscape: DeepDub, Respeecher, Papercup, and Who’s Actually Leading

- Where Human-in-the-Loop Still Wins—and Why It Always Will

- What This Means for Studios: Speed, Scale, and the Global Release Window

- What This Means for Localization Vendors: Adapt or Commoditize

- Ethics, Talent Rights, and the Consent Problem

- Sourcing the Right AI Dubbing Partner: What Smart Buyers Check

- FAQ

- Conclusion

Find Verified AI Dubbing and Localization Partners Before Your Release Window Closes

Netflix, Warner Bros, and Paramount use Vitrina to track 140,000+ companies across the global entertainment supply chain. Ask VIQI—our AI research engine—to surface verified localization vendors and AI dubbing platforms matched to your project’s language, timeline, and volume requirements.

✔ Free to explore | ✔ No credit card needed | ✔ Real-time vendor intelligence

The Market Reality: Two Growth Curves, One Disruption

Let’s separate what’s actually happening from the noise. The video localization market—when you include traditional dubbing, subtitling, and AI-assisted workflows combined—represents a market of roughly $4 to $6.5 billion depending on how you scope it. Anton Dvorkovich, CEO of Dubformer, has pegged the video localization addressable market at $6.5 billion, with media and entertainment, corporate video, gaming, and e-learning as the four primary demand pillars. That’s the total pie.

Within that, the specifically AI-powered dubbing software segment—the automated voice synthesis, neural translation, and lip-sync generation layer—sits at a smaller but explosively growing number: $1.16 billion in 2026, projected at $3.66 billion by 2035 at 14.2% CAGR. That growth rate doesn’t describe incremental improvement. It describes a fundamental workflow replacement happening in real time.
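A quick way to sanity-check growth figures like these is to recompute the compound annual growth rate from the two endpoints. The sketch below is a minimal check, assuming the 2026 and 2035 values quoted above are the endpoints of a nine-year window; the function names are illustrative, not taken from any market report. The source report behind the 14.2% figure may use a different base year, so a small gap between the quoted and the implied rate is not necessarily an error.

```python
def implied_cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate implied by two endpoint values."""
    return (end_value / start_value) ** (1 / years) - 1


def project(start_value: float, rate: float, years: int) -> float:
    """Value reached by compounding start_value at rate for the given years."""
    return start_value * (1 + rate) ** years


# Figures quoted in the article, in billions of US dollars.
YEARS = 2035 - 2026  # assumption: 2026 is the base year of the forecast

ai_dubbing = implied_cagr(1.16, 3.66, YEARS)
film_dubbing = implied_cagr(4.66, 8.79, YEARS)
at_quoted_rate = project(1.16, 0.142, YEARS)

print(f"AI dubbing software, implied CAGR: {ai_dubbing:.1%}")
print(f"Film dubbing overall, implied CAGR: {film_dubbing:.1%}")
print(f"$1.16B compounded at 14.2% for {YEARS} years: ${at_quoted_rate:.2f}B")
```

Under that nine-year assumption, the implied rate for AI dubbing software comes out slightly below the quoted 14.2%, and roughly double the implied rate for the film dubbing market as a whole.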

But here’s what the growth projections don’t show you: the two curves aren’t canceling each other out. Traditional high-quality dubbing—prestige drama, theatrical release, franchise content where emotional authenticity is non-negotiable—isn’t shrinking. Over 60% of viewers globally prefer dubbed content over subtitled, and that preference is driving new demand for dubbing in previously undertreated language markets. AI isn’t eliminating the need for quality localization. It’s expanding total volume to a scale that human-only workflows could never cost-effectively serve.

The disruption is in the middle: commodity localization of high-volume unscripted content, back-catalog library refreshes, FAST channel programming, and content that’s been sitting unleveraged because the economics of traditional dubbing made multi-language release prohibitive. That’s where AI is winning decisively—and where localization vendors who haven’t adapted their service architecture are already losing revenue.

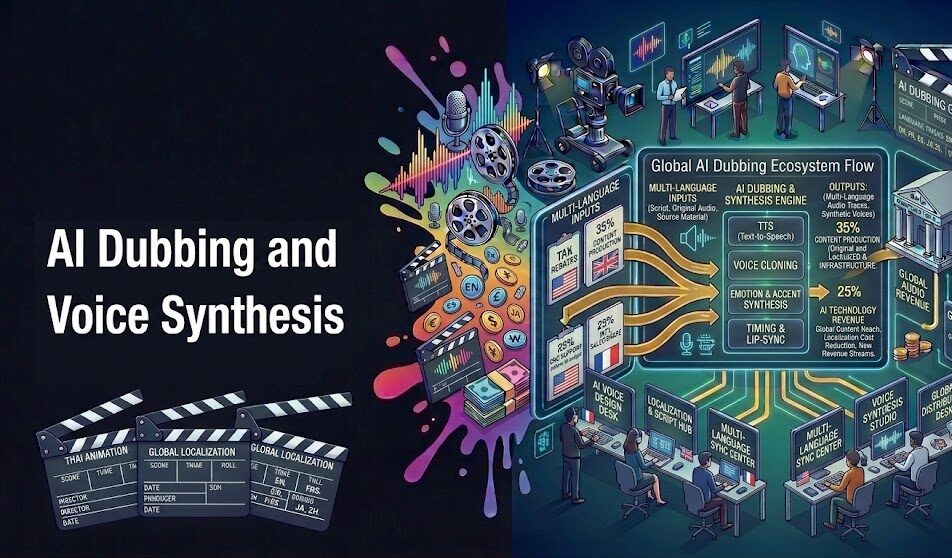

How AI Dubbing Actually Works in 2026

The 2026 generation of AI dubbing isn’t what most people picture when they imagine synthetic voice: robotic, flat, uncanny. That was 2021. The current state involves several distinct technical layers that, when stacked correctly, produce output that’s becoming genuinely competitive with mid-tier human dubbing—at a fraction of the cost and in a fraction of the time.

Neural voice synthesis is the foundation: deep neural speech models trained on vast voice datasets that can replicate pitch, cadence, accent, and, increasingly, emotion. The critical advance in 2025-2026 is the integration of emotion recognition and transfer: systems that don’t just translate words but analyze the emotional register of the source performance and attempt to preserve it in the dubbed output. Ofir Krakowski, CEO and Co-Founder of DeepDub, has positioned the company specifically around this emotional authenticity problem, building what it calls an “emotional AI voice stack” that goes beyond linguistic accuracy into performance fidelity.

Lip-sync generation is the second layer, and it’s where 2026 differs most sharply from even two years ago. Modern AI dubbing doesn’t just swap audio; it uses generative models to alter the on-screen performer’s facial geometry to match the dubbed dialogue. The term the industry has adopted is visual dubbing: the audio and the visible mouth movement are both AI-generated, synchronized to the translated script. The results aren’t perfect (subtle artifacts still appear in long close-up sequences with complex phoneme patterns), but those artifacts are diminishing fast.

Voice cloning is the third layer: the most commercially valuable and the most ethically contested. When actors consent and studios contract properly, voice cloning allows a star’s voice to be synthesized across 20+ language versions while retaining the specific tonal signature audiences associate with that performer. Alex Serdiuk, co-founder and CEO of Respeecher, has built an entire business around this technology’s entertainment applications, including award-winning projects that used Respeecher’s synthetic voice replication to restore or preserve performer voices that would otherwise be unavailable. The technology’s role in content localization and creative storytelling has expanded dramatically since the company’s early work in film restoration.

Taken together, these three layers—neural voice synthesis, visual dubbing, voice cloning—represent a genuine capability leap. And the workflows have compressed accordingly. Where traditional dubbing of a 45-minute episode across 10 languages could take 6-10 weeks, current AI-assisted hybrid pipelines are delivering the same scope in days to 2 weeks, with costs cut by 40–60% in hybrid workflows and as much as 90% in fully automated pipelines for lower-fidelity use cases.
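To make those percentages concrete, the savings ranges can be applied to a single job. This is a rough sketch only: the baseline of $100 per finished minute per language is a hypothetical placeholder, not a quoted market rate, and `SavingsBand` and `cost_range` are illustrative names. The fully automated case is modeled at its best-case 90% saving, since the article gives only an upper bound.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SavingsBand:
    """Fraction of traditional dubbing cost saved, as a low-to-high range."""
    name: str
    low: float
    high: float


# Ranges quoted in the article.
HYBRID = SavingsBand("hybrid", 0.40, 0.60)
# "As much as 90%" is an upper bound, so only the best case is modeled here.
FULLY_AUTOMATED = SavingsBand("fully automated (best case)", 0.90, 0.90)


def cost_range(baseline_per_minute: float, minutes: int, languages: int,
               band: SavingsBand) -> tuple[float, float]:
    """Return (lowest, highest) projected cost for the whole multi-language job."""
    traditional_total = baseline_per_minute * minutes * languages
    return (
        traditional_total * (1 - band.high),  # largest saving gives lowest cost
        traditional_total * (1 - band.low),   # smallest saving gives highest cost
    )


BASELINE_PER_MINUTE = 100.0  # hypothetical traditional rate, USD per minute per language
MINUTES, LANGUAGES = 45, 10  # the 45-minute, 10-language episode from the text

traditional = BASELINE_PER_MINUTE * MINUTES * LANGUAGES
print(f"traditional: ${traditional:,.0f}")
for band in (HYBRID, FULLY_AUTOMATED):
    low, high = cost_range(BASELINE_PER_MINUTE, MINUTES, LANGUAGES, band)
    print(f"{band.name}: ${low:,.0f} to ${high:,.0f}")
```

Swapping in a real per-minute quote from a vendor turns this into a first-pass budget comparison across the three workflow types.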

Streaming Is the Catalyst—But Not the Whole Story

Netflix, Amazon Prime, and Disney+ expanding into 100+ countries didn’t just create demand for localization—they fundamentally reset audience expectations. Viewers in emerging markets who might have been satisfied with subtitles five years ago now expect dubbed content. Korean drama exports grew 35% in 2023, with over 60% of that content dubbed for foreign markets. The RWS acquisition of Papercup’s AI dubbing IP in June 2025 was a direct signal from a major language services company that AI dubbing is no longer an experimental technology—it’s a capability that enterprise-scale localization firms need to own rather than outsource.

Amazon Prime has launched an AI dubbing pilot program for films and series in English and Spanish. Netflix resumed Russian-language dubbing at scale using AI technologies, processing what’s described as millions of minutes of content annually. Over 800 studios across Europe began experimenting with synthetic voice dubbing in 2023 alone—and that was before the current generation of emotional AI voice models reached the market.

But streaming is the loudest driver, not the only one. FAST channel operators—who need to monetize deep back-catalog libraries in emerging markets without the production budgets of a Netflix—have become one of the most aggressive adopters of AI dubbing. If you’ve got 5,000 hours of unscripted content sitting in your catalog that’s never been localized for Arabic, Hindi, or Portuguese because the traditional dubbing economics didn’t work, AI dubbing changes the entire calculus. That catalog becomes a revenue asset. Our overview of AI dubbing applications in filmmaking covers how both streamers and catalog owners are deploying these workflows in practice.

And there’s a demand signal from audiences that executives shouldn’t ignore: 65% of audiences globally prefer consuming content in their native language. In India, 77% of Gen Z viewers actively watch dubbed or translated content. Asia-Pacific leads global localization market share with 33% in 2026—and it’s the fastest-growing region. Any studio with distribution ambitions in APAC or MENA that isn’t actively solving its localization economics is making a choice to leave audience share and subscription revenue on the table.

The Vendor Landscape: DeepDub, Respeecher, Papercup, and Who’s Actually Leading

The AI dubbing vendor landscape in 2026 is genuinely fragmented—which is both an opportunity and a problem for studios trying to source the right partner. There’s no dominant platform with an obvious lock-in position. Instead, you’ve got a spectrum of technical approaches, target market segments, and quality tiers that require real diligence to navigate.

DeepDub (Ofir Krakowski) has differentiated on emotional fidelity—their argument being that most AI dubbing fails not at the language level but at the performance level, generating technically accurate translations that sound nothing like how a human actor would deliver the same line. Their “emotional AI voice stack” architecture attempts to preserve the emotional signature of the source performance across languages. They’ve built specific capabilities around live dubbing—particularly relevant for sports, unscripted programming, and live events where traditional dubbing is operationally impossible.

Respeecher (Alex Serdiuk) has taken a different path—positioning as a voice replication specialist rather than a full localization pipeline. They’re the company that works on award-winning projects where the requirement is high-fidelity recreation of a specific performer’s voice—which might mean a historical figure, a deceased actor, or a talent whose voice needs to be aged or de-aged for a narrative role. Their localization application is voice consistency at scale: ensuring that a recognizable star’s dubbed versions feel like that performer across every language market.

Papercup (Abhirukt Sapru, SVP Commercial) built their business specifically around media and entertainment dubbing—the explicit claim being that AI can reproduce a speaker’s tone, pace, and emotion faithfully enough for broadcast-quality output. RWS acquiring Papercup’s IP in June 2025 was effectively the traditional language services industry acknowledging that this technology works well enough to compete for the contracts they’ve historically owned. It’s a significant market signal. TransPerfect, through its APAC operations led by Asher Loy (CBO), has been integrating AI capabilities into its broader localization offering for exactly the same reason—the enterprise-scale LSPs know they need to offer AI-augmented workflows or lose volume contracts to platforms that do.

Dubformer (Anton Dvorkovich) occupies the mid-market—designed specifically for video creators and mid-tier content producers who need affordable multi-language localization at speed. Their stated market addresses the $6.5 billion video localization opportunity not by competing for prestige drama contracts but by making AI dubbing economically accessible for producers who previously couldn’t justify the cost of traditional dubbing at all.

ElevenLabs leads on voice cloning technology breadth—their models support 150+ languages with 1,000+ voices and have 50+ million users globally—though their market positioning is broader than entertainment-specific applications. And Iyuno remains one of the largest enterprise-level localization providers, with deep studio relationships and a global infrastructure that AI-native startups don’t yet match for high-volume, high-reliability delivery. Our detailed breakdown of localization companies serving filmmakers maps the full vendor landscape across AI-native, hybrid, and traditional providers.

Where Human-in-the-Loop Still Wins—and Why It Always Will

The fully-automated AI dubbing pitch is seductive in a slide deck. In production reality, it’s where quality problems compound. And the industry—including the AI companies themselves—increasingly knows it. The emerging consensus across serious localization operators isn’t “AI replaces human dubbing” but “AI handles initial drafts and scale, humans handle cultural fidelity and emotional precision.”

There are specific categories of content where the human-in-the-loop model isn’t a quality preference—it’s a contractual and competitive requirement. Prestige scripted drama—the kind of content that drives platform subscriptions and awards conversation—demands voice performance that carries subtext, cultural idiom, and character-specific tics that current AI models flatten. You can AI-dub a reality show; you can’t AI-dub The Crown for a German theatrical audience and expect the result to hold up under critical scrutiny. That’s not a technology limitation that’s disappearing in the next 18 months.

Cultural adaptation is the second persistent limit. Translation and dubbing aren’t the same thing—and linguistic AI models have been much faster to solve the former than the latter. Idiom, cultural reference, humor register, and regional dialect involve judgment calls that require native-speaking human oversight. As Asher Loy, CBO at TransPerfect APAC, has articulated directly: AI’s transformative role in localization doesn’t eliminate the human expertise required to navigate cultural nuance in Asia’s diverse markets. The tool is a multiplier, not a replacement.

The hybrid model, in which AI generates the draft and human reviewers and voice directors refine it, is delivering the best business outcomes in 2026: a 40–60% cost reduction and turnaround compressed from weeks to days, while maintaining the quality floor that broadcast-standard content requires. This is where localization vendors who’ve invested in AI-augmented workflows are genuinely outcompeting those who’ve gone either fully automated or stayed fully manual. Their pricing is competitive with the AI-only platforms but their quality is significantly higher, which is a position that’s hard to attack from either direction.

“AI’s transformative role in localization doesn’t eliminate the human expertise required to navigate cultural nuance. The tool is a multiplier—blending innovation and collaboration is how you shape the future of global media.”

— Asher Loy, Chief Business Officer, TransPerfect APAC (Vitrina LeaderSpeak)

What This Means for Studios: Speed, Scale, and the Global Release Window

For studio executives, the localization question in 2026 isn’t “should we use AI dubbing?” It’s “which content tiers should be AI-led, which should be hybrid, and which should remain fully human—and are our vendor contracts structured to deliver all three?”

The simultaneous global release model that streaming platforms have made standard creates a localization bottleneck that traditional dubbing workflows simply can’t clear. A 10-episode drama releasing across 15 language markets on the same date requires either a massive upfront investment in parallel human dubbing production—which was historically reserved for major franchise titles—or a hybrid AI workflow that can compress the timeline. The economics are increasingly dictating the latter for everything except the top-tier tentpole releases.

But studios have a sourcing problem that mirrors the broader Fragmentation Paradox. There are now dozens of AI dubbing platforms, hybrid localization firms, and traditional vendors offering “AI-augmented” services without much transparency about what that actually means in practice—what models they’re using, what the human review process involves, what quality benchmarks they’re measured against, and whether their technical infrastructure can handle your volume at your timeline without degradation. 15,000+ hours of content are dubbed monthly across the global streaming ecosystem. Choosing the wrong vendor for a high-profile release isn’t just a quality problem—it’s a reputational exposure that hits before the content earns a single subscriber.

Smart buyers are now building what amounts to a tiered vendor architecture: one or two premium human-led studios for flagship prestige content, a certified hybrid platform for mid-tier volume, and an AI-native tool for catalog refreshes and FAST channel library expansion. That’s not three vendors—it’s a localization strategy. And the studios that have formalized it are shipping faster and spending less per hour of localized content than those still making vendor decisions on a project-by-project basis. Our analysis of AI in the entertainment supply chain explores how this tiered approach is reshaping procurement across post-production and localization.
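The tiered vendor architecture described above amounts to a simple routing rule from content category to workflow. The sketch below is one way to write that rule down, assuming four content categories drawn from the paragraph; the category keys and the `VendorTier` and `route` names are hypothetical labels, not an industry taxonomy.

```python
from enum import Enum


class VendorTier(Enum):
    HUMAN_LED = "premium human-led studio"
    HYBRID = "certified hybrid platform"
    AI_NATIVE = "AI-native tool"


# Hypothetical category keys, mapped as the tiered strategy describes.
ROUTING = {
    "flagship_prestige": VendorTier.HUMAN_LED,
    "mid_tier_volume": VendorTier.HYBRID,
    "catalog_refresh": VendorTier.AI_NATIVE,
    "fast_channel_library": VendorTier.AI_NATIVE,
}


def route(content_category: str) -> VendorTier:
    """Return the vendor tier for a content category, or fail loudly if unmapped."""
    try:
        return ROUTING[content_category]
    except KeyError:
        known = ", ".join(sorted(ROUTING))
        raise ValueError(
            f"No tier defined for {content_category!r}; known categories: {known}"
        ) from None


for category in ROUTING:
    print(f"{category}: {route(category).value}")
```

Raising an error on an unmapped category, rather than defaulting to the cheapest tier, reflects the point of formalizing the strategy: every title gets an explicit tier decision instead of an ad hoc one.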

What This Means for Localization Vendors: Adapt or Commoditize

Let’s be direct: the localization vendors who are going to struggle aren’t the ones who can’t do AI dubbing. They’re the ones who’ve defined their value proposition entirely around language services at a unit price, and haven’t invested in the differentiated capabilities that AI can’t replace. That’s a structural positioning problem, not a technology problem.

The Fragmentation Paradox cuts both ways in the localization market. On the demand side, studios are now drowning in vendor options and struggling to evaluate them. On the supply side, localization vendors who’ve historically won contracts through relationship networks and festival presence are discovering that studios with AI sourcing intelligence—or access to platforms like Vitrina that surface verified vendor track records across 400,000+ projects—are no longer defaulting to familiar names just because they’re familiar. The relationship advantage is eroding. The capability transparency is increasing.

Vendors who are thriving in this environment are doing three things. First, they’re owning a specific quality tier rather than trying to compete across all of them. The vendors trying to sell themselves as both premium prestige dubbing studios and high-volume AI platforms are confusing buyers and underdelivering against both standards. Pick your lane and be excellent in it.

Second, they’re investing in proprietary AI tooling or certified platform partnerships—specifically to reduce turnaround times without sacrificing the cultural adaptation and quality review processes that justify their pricing premium over the pure-AI platforms. A traditional dubbing studio that’s integrated DeepDub’s emotional AI layer into its review workflow is fundamentally more competitive than one that’s doing everything manually. The hybrid isn’t a compromise—it’s the product.

Third—and this is the one most vendors are missing—they’re making themselves discoverable with verified capability data, not just promotional claims. Studios using Vitrina’s platform can surface localization vendors by language specialization, project type, studio client history, and verified delivery track record. A vendor whose capabilities are opaque to the platform’s intelligence—no verified credits, no project history, no client validation—is invisible to a growing proportion of the commissioning market. In 2026, being findable matters as much as being capable. Our guide on how localization vendors are adopting AI workflows covers the operational shifts happening across the sector.

Ethics, Talent Rights, and the Consent Problem

You can’t write about AI dubbing and voice synthesis in 2026 without addressing the consent question directly, because studios and vendors that ignore it are building legal exposure into every contract they sign. Voice cloning raises consent and intellectual property concerns that jurisdictions are only now beginning to resolve with enforceable specificity, and the “authorized AI” frameworks that studios are developing aren’t an optional courtesy: they’re becoming fundamental to completion bond requirements and distribution deal structures.

Respeecher has made ethical standards a core part of their business differentiation—explicit consent frameworks, clear IP ownership structures, and transparency with both performers and production companies about how synthetic voice models are trained and used. That’s not just ethics; it’s smart commercial positioning. As voice actors and their unions negotiate AI use provisions into talent agreements, vendors who’ve built consent infrastructure into their workflows have a structural advantage over those who haven’t.

The AI voice cloning misuse risk—deepfakes, unauthorized voice replication, disinformation applications—is real and has already generated regulatory attention in several jurisdictions. Studios sourcing AI dubbing partners need to verify not just the quality of the voice synthesis but the provenance of the training data and the consent documentation for any proprietary voice models being used in production. As Deadline has reported extensively, AI voice and likeness rights are now among the most actively negotiated terms in SAG-AFTRA and other talent agreements—meaning the legal landscape around what your vendor can legally deliver is actively shifting. And as noted by Variety, studios that build consent infrastructure into their localization workflows early are better positioned for the regulatory environment taking shape across the EU and several US states.

Sourcing the Right AI Dubbing Partner: What Smart Buyers Check

This is where the data deficit costs you real money. The localization vendor market is fragmented across hundreds of companies—ranging from AI-native startups with 18 months of operational history to enterprise-scale LSPs with global studio infrastructure. Choosing wrong on a 15-language release project isn’t recoverable mid-production. Here’s what the due diligence checklist should actually include in 2026.

Technical stack transparency is the starting point. Which AI voice synthesis models are they using—proprietary, licensed, or open-source? What does their visual dubbing pipeline actually involve? Is the lip-sync output AI-generated or overlaid in post? How many languages does their model support natively versus via third-party translation layers? These aren’t hostile questions; any serious vendor will answer them in a first meeting. If they won’t, that tells you something.

Project history at your content type is the second check. A vendor that’s done 500 hours of corporate training localization may have very different quality benchmarks than one that’s done premium scripted drama for a major streamer. Ask for verified credits—not testimonials—in the specific content category you’re commissioning. The Vitrina platform surfaces this directly: 140,000+ companies mapped with verified project histories across the supply chain, so you’re not relying on what vendors self-report in their capability decks.

Consent and compliance documentation is non-negotiable. Get the vendor’s voice talent consent framework in writing before you sign anything. Understand who owns the synthetic voice model being used, whether the training data was ethically sourced, and what your studio’s liability exposure is if a talent rights claim emerges post-delivery. This is no longer a nice-to-have—it’s a material contract term.

Quality review process specificity matters more than the AI capabilities claimed. Ask: how many human reviewers touch each hour of output? What’s the native-speaker review process for cultural adaptation? What QC benchmarks define “broadcast ready” for this vendor? The vendors doing this well have answers that are specific and documented—not vague commitments to “quality assurance” that dissolve when you’re chasing a delivery.
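The four checks above can be recorded per vendor so that gaps are visible at a glance. This is a minimal sketch, assuming each check is reduced to a yes/no answer; the `VendorAssessment` class and its field names are illustrative, and a real assessment would carry supporting documents rather than booleans. Consent documentation is treated as blocking because the text calls it non-negotiable.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class VendorAssessment:
    """Yes/no outcome of each due-diligence check for one vendor."""
    stack_transparency: bool
    verified_credits_in_content_type: bool
    consent_documentation: bool
    documented_review_process: bool

    def gaps(self) -> list[str]:
        """Names of the checks this vendor did not pass, in checklist order."""
        return [f.name for f in fields(self) if not getattr(self, f.name)]

    def is_blocked(self) -> bool:
        """Missing consent documentation rules a vendor out regardless of other results."""
        return not self.consent_documentation


# Hypothetical vendor: strong on everything except credits in the buyer's content type.
candidate = VendorAssessment(
    stack_transparency=True,
    verified_credits_in_content_type=False,
    consent_documentation=True,
    documented_review_process=True,
)

print("Blocked:", candidate.is_blocked())
print("Open gaps:", candidate.gaps())
```

Keeping the blocking rule separate from the gap list lets a buyer distinguish a vendor that needs follow-up questions from one that should not reach a contract at all.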

Frequently Asked Questions

What is AI dubbing and how does it differ from traditional dubbing?

AI dubbing uses neural voice synthesis, machine translation, and generative visual models to replace original dialogue with localized audio, without requiring human voice actors to record in a studio. Traditional dubbing involves human voice actors, studio recording sessions, script adaptation specialists, and audio engineers working sequentially. AI dubbing compresses or automates most of these stages, reducing cost by 40–90% and turnaround from weeks to days depending on the workflow and quality requirements. The key difference isn’t just cost and speed; it’s scalability: AI can generate 20 language versions simultaneously, where traditional dubbing typically produces them sequentially.

How large is the AI dubbing market in 2026?

The AI dubbing software market is valued at approximately $1.16 billion in 2026, projected to reach $3.66 billion by 2035 at a 14.2% CAGR. The broader film dubbing market, which includes traditional and AI-assisted workflows combined, sits at $4.66 billion in 2026 and is expected to reach $8.79 billion by 2035. The total video localization market, including subtitling, translation, and all dubbing methods, is estimated at roughly $4 to $6.5 billion depending on scope, with Dubformer CEO Anton Dvorkovich citing the $6.5 billion figure. These figures reflect both the growth of AI adoption and the underlying demand expansion driven by global streaming platform growth.

What is voice synthesis and how is it different from voice cloning?

Voice synthesis is the AI generation of speech from text—creating a voice output without a specific target voice model to replicate. It produces a believable human voice but not one associated with a specific performer. Voice cloning takes a specific individual’s voice as the training input and generates new speech that matches that person’s particular tonal signature, cadence, and speech patterns. In entertainment localization, voice cloning is most valuable when a recognizable star’s voice needs to be consistent across language markets or when historical or unavailable performer voices must be recreated for narrative purposes. Voice cloning requires explicit consent documentation and raises IP questions that voice synthesis does not.

Which content types are best suited to AI dubbing in 2026?

AI dubbing performs best on: high-volume unscripted content (reality, documentary, factual programming), back-catalog library refreshes for FAST channel distribution, corporate training and e-learning localization, and social media/short-form creator content. It’s least suited—and most likely to fall below broadcast quality without significant human review—on prestige scripted drama where subtle emotional performance, cultural idiom, and star vocal identity matter to audience experience and platform positioning. The practical framework: if the content’s value proposition is primarily informational or entertainment-at-scale rather than deep emotional engagement with specific performers, AI dubbing is competitive. If it’s character-driven prestige content, a hybrid human-in-the-loop model delivers better results.

What are the main AI dubbing companies active in entertainment in 2026?

The key players in entertainment-focused AI dubbing include: DeepDub (emotional AI voice stack, live dubbing capabilities), Respeecher (high-fidelity voice replication for prestige and IP-sensitive applications), Papercup (media and entertainment AI dubbing, now under RWS following June 2025 acquisition), Dubformer (mid-market AI localization platform), and ElevenLabs (broad voice cloning platform, 150+ languages). Enterprise-scale LSPs including Iyuno, TransPerfect, and ZOO Digital have integrated AI into their workflows alongside traditional studio capabilities. The market remains fragmented with no dominant platform across all use cases.

How should studios evaluate AI dubbing vendors in 2026?

Studios should evaluate AI dubbing vendors on five dimensions: (1) Technical stack transparency—which models, what training data provenance, what visual dubbing pipeline; (2) Verified project history in your specific content type, not just general localization claims; (3) Consent and compliance documentation—explicit frameworks for voice talent rights and AI training data; (4) Quality review process—how many human reviewers, what native-speaker cultural oversight, what QC benchmarks; (5) Volume and timeline capacity—verified ability to deliver your language count on your schedule without quality degradation. Platforms like Vitrina surface verified vendor track records across 400,000+ projects, enabling data-driven selection rather than relationship-dependent referrals.

Will AI dubbing replace traditional localization vendors entirely?

Not entirely, but it will eliminate the traditional vendors who’ve defined their value as “we record voice actors in a studio and charge per hour.” The segments that remain irreplaceable for human-led localization are prestige drama, theatrical-release features, and any content where cultural adaptation requires deep native-market expertise that AI models don’t reliably replicate. What AI is replacing, and replacing decisively, is high-volume commodity dubbing of content where cost and speed matter more than emotional subtlety. The vendors who will thrive are those who’ve positioned themselves in the hybrid model: AI-generated initial output with human cultural review and quality oversight. That combination is currently more cost-competitive than fully human workflows and significantly higher in quality than fully AI pipelines.

Which regions are driving the most growth in AI dubbing demand?

Asia-Pacific leads localization market share at 33% in 2026 and is the fastest-growing region, driven by massive streaming adoption in India, Southeast Asia, and continued Korean content export growth (Korean drama exports grew 35% in 2023). North America follows at 31%, driven by major streaming platforms integrating AI dubbing into their global release workflows. MENA is the most rapidly expanding emerging market for dubbing demand, driven by Arabic-language content volume growth and Saudi/UAE streaming investment. Latin America shows strong early adoption—particularly for telenovela localization and Portuguese-market content. The highest-value language targets for new AI dubbing investment are Hindi, Arabic, Portuguese, Indonesian, and Mandarin.

Conclusion: AI Dubbing Isn’t Disrupting Localization—It’s Expanding It

The frame most coverage uses for AI dubbing is disruption—technology destroying an existing business model. That’s not quite right. What’s actually happening is more interesting: AI is expanding the total addressable market for localization by making multi-language content economically viable for content tiers and catalog volumes that could never justify traditional dubbing costs. The demand is genuinely new. And the competition for that demand—between AI-native platforms, hybrid vendors, and traditional studios—is the market event that’s reshaping the entire sector.

Key Takeaways:

- The AI dubbing software market is $1.16 billion in 2026 and projected to reach $3.66 billion by 2035 at a 14.2% CAGR, within a broader $4.66 billion film dubbing market that’s itself growing. Both curves are up; the mix between AI and human is shifting.

- Streaming is the demand catalyst: Simultaneous global releases across 15+ language markets have created a localization bottleneck that traditional workflows can’t clear at scale or cost.

- The hybrid model wins in 2026: AI initial output + human cultural review delivers 40–60% cost reduction and dramatic timeline compression while maintaining broadcast-quality output for mid-tier content.

- Prestige drama stays human-led: Character-driven premium content where emotional fidelity and cultural nuance matter requires human voice performance and native-speaking cultural oversight that AI doesn’t yet reliably replicate.

- Consent infrastructure is non-negotiable: Voice cloning raises IP and talent rights issues that must be resolved contractually before production—not after delivery. Vendors who’ve built consent frameworks into their workflows have a structural compliance advantage.

- Sourcing intelligence is the competitive edge: The localization vendor market is as fragmented as the broader supply chain. Studios and vendors alike need verified capability data—not festival contacts—to make decisions that hold up under production pressure.

The vendors who thrive in 2026’s AI dubbing landscape won’t be the ones who went fully automated or stayed fully manual. They’ll be the ones who built the hybrid architecture, invested in consent compliance, differentiated on cultural expertise, and made their verified capabilities discoverable to studios who are sourcing smarter than they ever have before. Start with real intelligence. Start with Vitrina.
