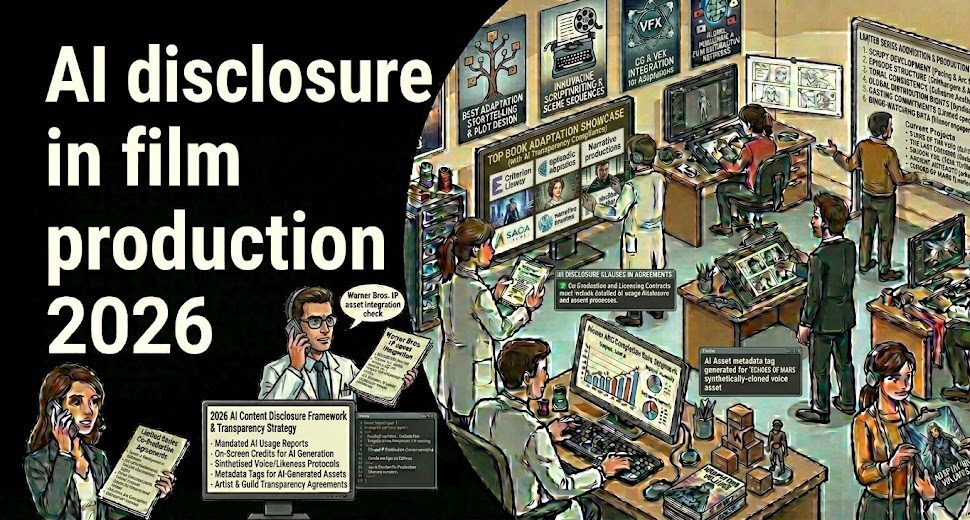

The moment you greenlight a production using AI tools in 2026, you’ve triggered a disclosure obligation. Possibly several, simultaneously, from overlapping regulatory frameworks that don’t always agree with each other.

AI disclosure in film production has gone from a theoretical compliance question to a practical production reality — one with direct consequences for your completion bond, your distribution deal, your guild relationships, and in some cases your awards eligibility.

Here’s the thing: most producers are behind on this. Not because they’re ignoring it — but because the disclosure landscape is genuinely fragmented. The WGA, SAG-AFTRA, the Academy of Motion Picture Arts and Sciences, California’s AB 412, New York’s synthetic performer law (effective June 2026), and the EU AI Act’s transparency regime (kicking in August 2026) — each framework defines AI differently, requires different disclosures, and carries different consequences for non-compliance. Your entertainment attorney and your completion bond underwriter may be reading entirely different rulebooks.

This guide maps the disclosure landscape as it actually stands in 2026: what each framework requires, where the requirements conflict, and how the smartest producers are building Authorized AI™ governance structures that address all of them at once — instead of discovering gaps at the worst possible moment in the deal cycle.

Ask VIQI: Which AI Tools Are Guild-Compliant for Your Production?

Trusted by Netflix, Warner Bros, and Paramount teams. Get instant answers on AI disclosure requirements by production type, territory, and guild agreement — with sourced references.

✓ 200 free credits | ✓ No credit card required | ✓ Real-time intelligence

Why AI Disclosure in Film Production Became a Financial Issue, Not Just a Creative One

Let’s dispense with the philosophical framing. The 2025 Oscar season made AI disclosure a very concrete business question when The Brutalist’s use of AI to modify Adrien Brody’s spoken Hungarian caused a wave of retroactive scrutiny, even though Brody went on to win Best Actor. The Academy subsequently ruled that AI-assisted films remain eligible for nominations, but simultaneously signaled movement toward mandatory AI disclosure requirements for the 2026 rules cycle, building on an optional disclosure form already in circulation. That’s the kind of regulatory environment where “we used AI tools in post” goes from a casual production note to a documented compliance obligation.

But the Academy is the least of your immediate concerns. The frameworks with the most direct financial bite are the guild agreements — specifically what SAG-AFTRA is preparing to demand in its TV/Theatrical contract negotiations, which open in 2026 with the current deal expiring in June. SAG-AFTRA’s National Executive Director Duncan Crabtree-Ireland has been explicit: the union didn’t get everything it wanted in 2023, and AI will be a central priority this cycle. The 2025 Commercials Contract already achieved something the 2023 TV/Theatrical deal could not — a contractual restriction on using SAG-AFTRA members’ work to train AI systems without union permission. That provision is coming for scripted film and TV next.

For producers with productions currently in development or pre-production, this means your AI tool selection decisions today will land inside a more restrictive contractual framework by the time you’re in post. And for financiers evaluating production packages, the question of how AI use has been documented and disclosed is becoming a legitimate underwriting variable — not a footnote.

WGA Disclosure Requirements: The Mutual Obligation Framework

The WGA’s 2023 Minimum Basic Agreement established a two-directional disclosure structure. Studios must disclose to writers when any material provided to them was generated by or incorporates AI output. Writers who choose to use AI tools in their work must obtain company consent and follow applicable company policies — but crucially, using AI doesn’t strip a writer of credit or authorship status. The resulting work is considered the writer’s literary material.

On paper, that is a relatively clean framework. In practice, it creates a documentation requirement that most productions haven’t operationalized. If your development team or showrunner is using AI tools to generate pitch materials, outline drafts, or scene breakdowns (even internally, even as a starting point), that usage needs to be disclosed to any WGA writers brought onto the project. The disclosure obligation doesn’t just cover production-stage writing. It applies to any AI-generated content that writers are given as part of their assignment. Productions running AI-assisted development pipelines without disclosure protocols are sitting on a potential grievance.

SAG-AFTRA’s AI Disclosure Framework: Consent, Compensation, and What’s Coming Next

SAG-AFTRA’s framework is more granular — and more consequential for production budgeting — because it governs not just disclosure but consent and compensation for specific AI applications. The 2023 TV/Theatrical Agreement defined two digital replica categories that matter for any production using AI on performer likenesses or performances.

An Employment-Based Digital Replica is a replica created with a performer’s physical participation during their contracted employment — think de-aging, digitally extending a performance, or generating additional footage. An Independently Created Digital Replica involves generating a performer’s likeness without them being present. Both require specific consent and both carry compensation obligations, but the Independently Created category requires more explicit agreement and carries tighter limitations on scope and duration. You can’t use a performer’s digital replica beyond what they explicitly consented to, for longer than they agreed to, or for purposes not described in the original agreement.
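The scope limits above (permitted purpose and agreed duration) can be tracked as structured data rather than buried in deal memos. The following is a minimal sketch, not a legal tool: the `ReplicaConsent` record, its field names, and the `check_replica_use` helper are all hypothetical and only illustrate the kind of check a production could run before using a replica.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class ReplicaConsent:
    """Hypothetical record of what a performer agreed to (illustrative fields only)."""
    performer: str
    category: str                  # "employment_based" or "independently_created"
    permitted_uses: frozenset[str]  # purposes described in the original agreement
    valid_from: date
    valid_until: date


def check_replica_use(consent: ReplicaConsent, use: str, on: date) -> list[str]:
    """Return a list of scope problems; an empty list means the use is within consent."""
    problems = []
    if use not in consent.permitted_uses:
        problems.append(f"use '{use}' is not covered by the consent")
    if not (consent.valid_from <= on <= consent.valid_until):
        problems.append(f"date {on.isoformat()} is outside the consent window")
    return problems


# Example: consent covers de-aging during 2026 only.
consent = ReplicaConsent(
    performer="Performer A",
    category="employment_based",
    permitted_uses=frozenset({"de-aging"}),
    valid_from=date(2026, 1, 1),
    valid_until=date(2026, 12, 31),
)
print(check_replica_use(consent, "de-aging", date(2026, 6, 1)))       # prints []
print(check_replica_use(consent, "voice cloning", date(2027, 1, 15)))  # two problems
```

An empty result only means the proposed use matches what was recorded. The actual consent language and compensation terms still need review by counsel.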

The enforcement is real. In May 2025, SAG-AFTRA filed an Unfair Labor Practice charge against Llama Productions, the signatory company for Fortnite, alleging that it replaced performers’ work with an AI-generated Darth Vader voice without providing notice or bargaining with the union. That case signals how the union is likely to pursue violations going forward: not post-hoc complaints, but ULP charges filed at the point of non-disclosure. If you’re replacing or supplementing a performer’s voice with AI without the required notice and bargaining, expect the union to move fast. As we analyzed in our broader breakdown of Hollywood’s AI adoption framework in 2026, the pattern across guild enforcement is moving toward preemptive action rather than reactive arbitration.

And this is before the June 2026 renegotiation. Crabtree-Ireland has specifically flagged training restrictions — preventing studios from using SAG-AFTRA members’ recorded work to train AI systems — as a priority for the next TV/Theatrical cycle. The 2025 Commercials Contract already contains this restriction. Any production whose contracts don’t proactively address training use is likely to face retroactive renegotiation pressure, or to find that their AI workflow assumptions are directly contradicted by whatever deal lands in 2026.

New York and the EU AI Act: The Regulatory Layer Coming for Every Distributor in 2026

The guild frameworks govern your production relationships. But two external regulatory regimes — one U.S. state-level, one European — are coming for your distribution footprint.

New York’s Synthetic Performer Law (Effective June 2026)

New York Governor Kathy Hochul signed legislation in December 2025 amending the state’s General Business Law to require explicit disclosure when AI-generated synthetic performers — digitally created human-like figures that don’t represent any specific real person — appear in advertisements. The law takes effect in June 2026. For productions with any New York advertising or commercial distribution component, this creates a disclosure obligation that runs parallel to, but distinct from, the SAG-AFTRA Commercials Contract. The two frameworks are aligned on intent but differ in specifics, and the White House’s December 2025 Executive Order seeking to preempt state AI laws adds further complexity — though the New York law is expected to survive federal challenge in the near term given SAG-AFTRA’s strong legislative support behind it.

And California’s AB 412 framework adds statewide disclosure standards for AI-generated content in entertainment — overlapping with both the guild agreements and New York’s regime for productions shooting in California. Productions operating across multiple U.S. states are now navigating three distinct but partially overlapping regulatory frameworks before the EU even enters the picture.

The EU AI Act: August 2026 and the Deepfake Taxonomy

If any of your distribution runs to European territories — and for any production with meaningful international sales, it will — the EU AI Act’s transparency requirements become enforceable in August 2026. Article 50 requires that any AI-generated or manipulated audio, image, or video content that constitutes a “deepfake” must be disclosed as artificially generated or manipulated. There’s an artistic exception: where content forms part of an evidently creative, fictional, or satirical work, disclosure can be made in a manner that doesn’t “hamper the display or enjoyment” of the work — meaning a credit sequence notice rather than an in-frame label.

But here’s what the artistic exception doesn’t resolve: the EU’s emerging AI content taxonomy distinguishes between “fully AI-generated content” and “AI-assisted content,” and that classification matters beyond just labeling. Under European copyright concepts, content labeled as fully AI-generated may carry limited copyright protection — meaning third parties could potentially reuse it freely. That’s a downstream rights and licensing risk that most production teams haven’t integrated into their chain-of-title analysis. A production that uses AI to generate establishing shots or background environments and discloses this under the EU AI Act may be inadvertently weakening its own IP position for those specific elements. As Deadline has reported in its coverage of the evolving guild-AI landscape, the industry is still working out how consent frameworks, copyright protection, and disclosure obligations interact — and the EU’s classification system adds another variable.

The EU’s Draft Code of Practice — with a final version expected by June 2026, just before the August enforcement date — is adding further specificity. It calls for a common icon for AI-generated and manipulated content, internal processes for identifying deepfake elements, and audit-ready compliance documentation that regulators can review. Film studios and streaming services are specifically named as entities facing “comprehensive requirements” for labeling systems that work across production and distribution pipelines.

Authorized AI™: The Governance Structure That Resolves the Compliance Stack

The practical problem isn’t that any single framework is impossible to comply with. It’s that you’re now simultaneously managing WGA disclosure obligations, SAG-AFTRA consent requirements, New York and California state law, EU distribution obligations, and completion bond underwriting questions — each of which defines “AI use” slightly differently and requires different documentation.
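One way to make the overlap concrete is to write down the production's footprint and derive which obligations it triggers. The sketch below is illustrative only: the `Footprint` fields and the one-line summaries are assumptions drawn from the descriptions in this guide, not statutory or contractual language.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Footprint:
    """Hypothetical summary of a production's exposure (illustrative fields only)."""
    wga_writers: bool
    sag_aftra_performers: bool
    new_york_advertising: bool
    california_production: bool
    eu_distribution: bool
    completion_bond: bool


def applicable_frameworks(fp: Footprint) -> list[str]:
    """List the disclosure frameworks from this guide that the footprint triggers."""
    rules = [
        (fp.wga_writers, "WGA 2023 MBA: disclose AI-generated material given to writers"),
        (fp.sag_aftra_performers, "SAG-AFTRA: consent and compensation for digital replicas"),
        (fp.new_york_advertising, "New York synthetic performer law: disclosure in advertisements"),
        (fp.california_production, "California AB 412: state-level disclosure standards"),
        (fp.eu_distribution, "EU AI Act Article 50: deepfake transparency"),
        (fp.completion_bond, "Completion bond: AI usage documentation for underwriting"),
    ]
    return [label for applies, label in rules if applies]


# Example: a WGA-covered feature with European sales and a completion bond.
feature = Footprint(
    wga_writers=True,
    sag_aftra_performers=False,
    new_york_advertising=False,
    california_production=False,
    eu_distribution=True,
    completion_bond=True,
)
for item in applicable_frameworks(feature):
    print(item)
```

A flat checklist like this does not resolve conflicts between frameworks, but it does make visible early which rulebooks your attorney and your bond underwriter each need to read.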

Authorized AI™ is the framework that rationalizes this stack. Rather than treating each regulatory obligation as a separate compliance silo, Authorized AI governance starts from the tool layer: only AI tools with verified, licensed training data enter the production pipeline. That single upstream discipline cascades into cleaner chain-of-title documentation, cleaner completion bond conversations, cleaner distribution deal negotiations, and cleaner guild compliance — because you’re not working backward from a finished product trying to reconstruct what AI touched and whether it was authorized.

The commercial example that established this framework at scale is Disney’s $1 billion partnership with OpenAI — a structured content licensing deal that gives OpenAI access to Disney’s IP under explicit terms, while giving Disney AI capabilities trained on authorized data rather than scraped content. That’s the authorized pipeline model applied at studio scale. As we broke down in our analysis of how Disney’s authorized AI deal reshapes Hollywood, the implication for independent producers isn’t that you need a billion-dollar licensing deal — it’s that you need to know which tools in your production stack are operating on authorized data, and which ones aren’t.

Productions using unauthorized AI tools — those trained on scraped intellectual property without explicit licensing — face a specific and serious risk: IP liability that surfaces during distribution deal negotiations and completion bond underwriting, typically at exactly the wrong moment. Completion bond insurers are actively developing AI disclosure requirements right now. Productions with documented Authorized AI governance — tool licensing verification, output chain-of-title tracking, guild compliance records — report materially smoother bond conversations. Those without documented governance are adding two to three weeks of friction at a critical financing moment, and sometimes discovering unbondable IP liability that restructures the entire deal.

The practical implementation steps break down across three production phases. During pre-production, you’re building your AI tool registry: which tools are in use, what their training data licensing covers, and how their output chains connect to your script and design elements. During production and post, you’re maintaining the usage log — scene by scene, sequence by sequence, tracking which elements were AI-generated, AI-assisted, or purely human-authored. And at delivery, you’re packaging your compliance documentation alongside the technical delivery requirements: guild notices, territory-specific disclosure materials, and chain-of-title records for AI-touched elements that can survive distribution due diligence. For a full operational breakdown of how AI frameworks affect production economics, see our detailed guide to AI production ROI and cost structures in 2026.
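The three phases above map naturally onto a small data model: a tool registry built in pre-production, a usage log maintained through production and post, and a summary produced at delivery. The following is a minimal sketch under stated assumptions. The class names, fields, and the `delivery_summary` output format are hypothetical, and a real pipeline would also attach guild notices and territory-specific materials.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from enum import Enum
from typing import Optional


class Origin(Enum):
    AI_GENERATED = "ai_generated"
    AI_ASSISTED = "ai_assisted"
    HUMAN = "human_authored"


@dataclass
class Tool:
    """Registry entry built in pre-production (illustrative fields only)."""
    name: str
    training_data_licensed: bool
    license_reference: str = ""


@dataclass
class UsageEntry:
    """One line of the scene-by-scene usage log."""
    scene: str
    element: str
    origin: Origin
    tool: Optional[str] = None  # None for purely human-authored elements


@dataclass
class ProductionLog:
    registry: dict[str, Tool] = field(default_factory=dict)
    entries: list[UsageEntry] = field(default_factory=list)

    def register(self, tool: Tool) -> None:
        self.registry[tool.name] = tool

    def record(self, entry: UsageEntry) -> None:
        # Refuse AI-touched entries that name a tool missing from the registry.
        if entry.origin is not Origin.HUMAN and entry.tool not in self.registry:
            raise ValueError(f"tool {entry.tool!r} is not in the registry")
        self.entries.append(entry)

    def delivery_summary(self) -> dict:
        """Count AI-touched elements and flag those made with unverified tools."""
        ai_touched = [e for e in self.entries if e.origin is not Origin.HUMAN]
        needs_review = [
            (e.scene, e.element)
            for e in ai_touched
            if not self.registry[e.tool].training_data_licensed
        ]
        return {"ai_touched": len(ai_touched), "needs_review": needs_review}


log = ProductionLog()
log.register(Tool("ToolA", training_data_licensed=True, license_reference="LIC-001"))
log.register(Tool("ToolB", training_data_licensed=False))
log.record(UsageEntry("Sc. 1", "dialogue", Origin.HUMAN))
log.record(UsageEntry("Sc. 2", "establishing shot", Origin.AI_GENERATED, "ToolB"))
print(log.delivery_summary())
```

Rejecting log entries for unregistered tools is a deliberate choice here: it forces the registry to be updated before a new tool touches the pipeline, rather than reconstructing usage after the fact.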

What Major Streamers and Distributors Are Actually Requiring in 2026

The formal regulatory frameworks tell part of the story. But acquisition agreements are where production teams typically get the first concrete signal of what platform standards actually look like in practice.

Netflix, Amazon MGM Studios, and Apple TV+ have all added AI content disclosure language to their acquisition and distribution agreements in 2025-2026 — requiring sellers to represent and warrant that AI-generated content in delivered productions has been disclosed in accordance with applicable guild agreements and that chain-of-title is clear for AI-assisted creative elements. The specific language varies by platform and by deal, but the underlying ask is consistent: tell us what AI touched, confirm you have the rights to it, and confirm the guild compliance is documented.

For productions targeting European platforms such as Canal+ and Sky, as well as MENA distributors like OSN, the EU AI Act obligations are increasingly being baked into acquisition terms directly, ahead of the August 2026 enforcement date. Buyers don’t want to discover AI compliance gaps post-acquisition, so they’re pushing the disclosure verification back to the production company at the contract stage. That shift means the cost of non-compliance isn’t just regulatory penalties. It’s deals falling through at closing when due diligence surfaces AI usage that was never disclosed, documented, or cleared for chain-of-title.

As Variety reported in its coverage of the Academy’s evolving AI standards, even the awards eligibility dimension is now commercially consequential. A film that can’t demonstrate adequate AI disclosure documentation could face eligibility problems if the Academy adopts the mandatory disclosure rules it has signaled, as well as distribution complications in territories where regulatory disclosure is legally required. Reputational risk and commercial risk now overlap.

The Disclosure Stack Is Only Getting More Complex — Get Ahead of It Now

If you’re waiting for the regulatory landscape to stabilize before building your AI disclosure framework, you’re already behind. The June 2026 New York law, the August 2026 EU AI Act transparency enforcement, and the SAG-AFTRA TV/Theatrical renegotiation are all converging within the same six-month window. Productions currently in development will hit distribution and delivery right in the middle of that transition.

The good news is that Authorized AI™ governance isn’t as operationally complex as the regulatory stack makes it sound — once it’s designed properly from the production start rather than retrofitted after principal photography. The framework doesn’t require you to avoid AI tools. It requires you to use them deliberately: with documented licensing verification, with guild-compliant disclosure protocols, with chain-of-title tracking, and with territory-by-territory distribution disclosure planning built in from day one. That’s not a burden on creative workflow — it’s risk management that protects the financial value of the work you’re already investing in. For more on how AI is reshaping the entertainment supply chain across every production layer, our comprehensive guide to AI in the entertainment supply chain covers the full operational picture.

Key Takeaways

- AI disclosure in film production now involves at least five overlapping frameworks: WGA (2023 MBA), SAG-AFTRA (TV/Theatrical + Commercials Contracts), California AB 412, New York’s synthetic performer law (effective June 2026), and the EU AI Act (Article 50 transparency obligations, effective August 2026).

- SAG-AFTRA’s June 2026 TV/Theatrical renegotiation is the highest-stakes near-term event: Training restrictions — preventing studios from using members’ recorded work to train AI without union consent — are a declared priority after being achieved in the 2025 Commercials Contract.

- Completion bond underwriters are actively developing AI disclosure requirements: Productions with documented Authorized AI™ governance report cleaner bond conversations and avoid the 2-3 week financing friction that undocumented AI usage can create.

- The EU’s “fully AI-generated” vs “AI-assisted” taxonomy carries copyright implications: Content classified as fully AI-generated may carry limited copyright protection under EU law — a downstream IP risk that production teams need to integrate into chain-of-title analysis now.

- Major streamers are pushing disclosure verification back to production companies at acquisition: Netflix, Amazon, and Apple TV+ acquisition agreements now include AI disclosure reps and warranties — discovering gaps at closing is a deal-stopper, not a negotiating point.

Frequently Asked Questions: AI Disclosure in Film Production 2026

What does AI disclosure in film production actually require in 2026?

AI disclosure requirements in film production now span multiple overlapping frameworks. The WGA’s 2023 MBA requires studios to disclose to writers when AI-generated content has been provided to them, and writers to get company consent before using AI tools themselves. SAG-AFTRA requires explicit informed consent and compensation for digital replica use, both employment-based and independently created. New York law (effective June 2026) requires disclosure of synthetic performers in advertising. The EU AI Act’s Article 50 transparency obligations (enforceable from August 2026) require disclosure of deepfake or AI-manipulated audio-visual content in European distribution, with an artistic exception for clearly fictional works. The Academy of Motion Picture Arts and Sciences is moving toward mandatory AI disclosure for Oscar-eligible films.

What is Authorized AI™ and why does it matter for production?

Authorized AI™ is the framework for using AI tools trained on licensed, verified intellectual property rather than scraped or unauthorized content. It matters for production because AI-generated content with unclear chain-of-title creates IP liability that surfaces during distribution deal negotiations and completion bond underwriting — often at the worst possible moment in the financing cycle. Productions with documented Authorized AI governance report cleaner bond conversations and avoid the 2-3 week friction that undocumented AI usage creates. The Disney/OpenAI $1 billion licensing partnership is the most prominent example of this model at studio scale.

How does SAG-AFTRA’s AI framework affect production budgets?

SAG-AFTRA’s AI provisions directly affect budgets through consent and compensation requirements. Using an employment-based digital replica of a performer — AI-generated footage of an actor for scenes they didn’t actually shoot — requires separate consent and compensation beyond their contracted performance. Creating an independently created digital replica (without the performer present) carries even stricter requirements. Productions using AI to replicate or replace performer voices without prior union notice and bargaining risk Unfair Labor Practice charges — as demonstrated by SAG-AFTRA’s May 2025 action against Llama Productions for replacing a voice actor with AI for the Darth Vader character in Fortnite.

What does the EU AI Act require for films distributed in Europe?

The EU AI Act’s Article 50 transparency obligations become enforceable in August 2026. For film distribution in EU territories, AI-generated or AI-manipulated deepfake content must be disclosed as artificially generated or manipulated. There’s an artistic exception for clearly creative or fictional works — disclosure can be made in a way that doesn’t disrupt viewer enjoyment, such as a credit sequence notice. However, the EU’s emerging taxonomy distinguishing “fully AI-generated” from “AI-assisted” content has potential copyright implications: content classified as fully AI-generated may carry limited copyright protection, potentially enabling free reuse by third parties. Productions distributing in Europe should integrate EU disclosure requirements into their chain-of-title documentation from pre-production.

Are AI-assisted films eligible for Oscar nominations?

Yes — the Academy of Motion Picture Arts and Sciences ruled in April 2025 that AI-assisted films remain eligible for Oscar nominations and awards, provided human creative involvement remains central. However, the Academy is simultaneously moving toward mandatory AI disclosure requirements for the 2026 rules cycle, building on an optional disclosure form already in use. Films like The Brutalist (which used AI to modify Adrien Brody’s spoken Hungarian) and Here (which used AI de-aging for Tom Hanks and Robin Wright) triggered the Academy’s heightened attention to disclosure practices. Productions targeting awards consideration should build comprehensive AI usage documentation from production start.

How does AI disclosure affect completion bond underwriting?

Completion bond insurers are actively developing AI disclosure requirements as of 2026. Primary risk categories include IP liability from unauthorized AI training data (which can block distribution and void the bond), budget variance from AI tool underperformance, and chain-of-title complications in AI-generated content. Productions with documented Authorized AI™ governance — including tool licensing verification and output chain-of-title tracking — report materially smoother bond conversations. Undocumented AI usage can add 2-3 weeks of friction at a critical financing moment, and in some cases surfaces unbondable IP liability that requires restructuring the production’s financing.

What is New York’s synthetic performer disclosure law?

New York’s synthetic performer disclosure law, signed by Governor Kathy Hochul in December 2025 and effective June 2026, amends New York’s General Business Law to require explicit disclosure when AI-generated “synthetic performers” (digitally created human-like figures that don’t represent any specific real person) appear in advertisements. A White House Executive Order issued the same day seeks to preempt state AI laws in favor of a unified federal standard, but the New York law is expected to survive in the near term given strong SAG-AFTRA backing and constitutional questions around preemption. Productions with any New York advertising or commercial distribution should prepare for compliance by June 2026.

How are streaming platforms handling AI disclosure in acquisition agreements?

Major streamers including Netflix, Amazon MGM Studios, and Apple TV+ have added AI content disclosure language to their acquisition and distribution agreements in 2025-2026. These provisions require sellers to represent and warrant that AI-generated content has been disclosed per applicable guild agreements, that chain-of-title is clear for AI-assisted elements, and that guild compliance is documented. European platforms are increasingly baking EU AI Act obligations directly into acquisition terms ahead of August 2026 enforcement. Discovering AI compliance gaps at closing is treated as a deal-stopper rather than a negotiating point — making pre-production AI documentation an acquisition prerequisite, not a delivery afterthought.
